Randomised trials remain the cornerstone of evidence in interventional radiology (IR), yet they are resource intensive and slow to yield results. Retrospective studies provide a practical complement by leveraging existing clinical data to describe real-world practice, capture rare events and long-term outcomes, and generate hypotheses efficiently. Building on our recent review in the journal CardioVascular and Interventional Radiology (CVIR), this opinion emphasises pragmatic steps to strengthen transparency and credibility, making retrospective findings more reliable and useful for everyday interventional oncology (IO) decision making.1

While randomised controlled trials remain the benchmark, they are costly and slow to design and complete. Retrospective clinical studies therefore offer a practical, complementary source of real-world evidence in interventional oncology: leveraging existing data to provide timely insights, cover broad patient populations, and illuminate long-term and rare outcomes in the diverse patients we treat.2 The key question is no longer whether such studies are ‘inferior’, but whether they are specified and analysed rigorously enough to inform the clinical decision at hand. By prespecifying a target trial framework, addressing confounding with modern methods, and reporting transparently, high-quality retrospective cohorts can complement, and often guide, prospective research in IO.1

The critique is familiar and warranted: confounding, selection bias, missingness, variable data quality, and limited generalisability.3 Retrospective observational studies should not be sold as causal proof. The right question, however, is not ‘are they useful?’ but ‘are they credible enough for the decision at hand?’

Credibility starts with intent

Predefine the question, population, index date, and outcomes. Then, emulate the target trial you wish you could run. Align follow-up, specify treatment strategies, and guard against biases. Use modern statistical adjustment and show your homework with sensitivity analyses for unmeasured confounding.4,5 Report transparently and reproducibly: adhere to the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) checklist, and share protocols, code, and curated data dictionaries.6,7
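As one illustration of a sensitivity analysis for unmeasured confounding, the sketch below computes an E-value: the minimum strength of association, on the risk ratio scale, that an unmeasured confounder would need with both treatment and outcome to fully explain away an observed risk ratio. The E-value is only one of several possible approaches and is our choice for this example; the function names `e_value` and `e_value_ci` are illustrative.

```python
import math


def e_value(rr: float) -> float:
    """E-value for an observed risk ratio (point estimate)."""
    if rr <= 0:
        raise ValueError("risk ratio must be positive")
    # Protective estimates (RR < 1) are inverted so the formula applies to RR >= 1.
    if rr < 1:
        rr = 1 / rr
    return rr + math.sqrt(rr * (rr - 1))


def e_value_ci(lower: float, upper: float) -> float:
    """E-value for the confidence limit closest to the null value of 1."""
    if lower <= 1 <= upper:
        # The interval already includes the null: no confounding is needed to reach it.
        return 1.0
    return e_value(lower) if lower > 1 else e_value(upper)


if __name__ == "__main__":
    # Hypothetical numbers, for illustration only.
    print(round(e_value(2.0), 2))
    print(round(e_value_ci(1.2, 3.0), 2))
```

A large E-value suggests that only a strong unmeasured confounder could account for the finding; a value close to 1 suggests the result is fragile. Reporting it for both the point estimate and the confidence limit nearest the null gives readers a sense of that fragility.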

Infrastructure matters

Multicentre registries and common data models lift us beyond single-institution idiosyncrasies.8,9 Structured IO reporting and core outcome sets add granularity that chart reviews lack. Natural language processing can unlock procedural nuance from notes; linkage to claims and registries extends follow-up. When prospective trials are infeasible, high-quality retrospective cohorts can serve as external controls for single-arm device studies, while also seeding pragmatic trials where equipoise remains.

The path forward is pragmatic. Journals and societies can help by enforcing prespecified analysis plans, target trial checklists, and complete case ascertainment standards. Clinicians should read effect sizes and uncertainty, not just p values; policymakers should weigh coherence across retrospective series, mechanistic plausibility, and patient-important outcomes.
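To make "effect sizes and uncertainty" concrete, the following minimal sketch computes a risk ratio with a 95% confidence interval from a two-by-two table, using the standard log-based (Katz) approximation. The function name `risk_ratio_ci` and the event counts are hypothetical and serve only as an example; a real analysis would also adjust for confounding.

```python
import math


def risk_ratio_ci(events_a: int, n_a: int, events_b: int, n_b: int, z: float = 1.96):
    """Risk ratio of group A versus group B with a log-based confidence interval."""
    if min(events_a, events_b) <= 0:
        raise ValueError("the log-based interval needs at least one event per group")
    rr = (events_a / n_a) / (events_b / n_b)
    # Standard error of log(RR) for two independent binomial proportions.
    se = math.sqrt(1 / events_a - 1 / n_a + 1 / events_b - 1 / n_b)
    lower = math.exp(math.log(rr) - z * se)
    upper = math.exp(math.log(rr) + z * se)
    return rr, lower, upper


if __name__ == "__main__":
    # Hypothetical cohort: 10/100 events after treatment A, 20/100 after treatment B.
    rr, lower, upper = risk_ratio_ci(10, 100, 20, 100)
    print(f"RR {rr:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

In this made-up example the point estimate suggests a halving of risk, yet the interval is wide and just crosses 1. That is the kind of information a p value alone hides.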

Done poorly, retrospective studies mislead. Done well, they shape practice, prioritise trials, and inform policy in ways randomised controlled trials alone cannot. In IO, that is not a compromise; it is how we responsibly learn from our patients to do better for the next ones.

References:

- Uhlig J, Kroencke T, Kim HS. Retrospective Clinical Studies in Interventional Oncology: Relevance and Challenges. Cardiovasc Intervent Radiol. Aug 6 2025. doi:10.1007/s00270-025-04143-2

- Bilhim T, Costa NV, Torres D, et al. Long-Term Outcome of Prostatic Artery Embolization for Patients with Benign Prostatic Hyperplasia: Single-Centre Retrospective Study in 1072 Patients Over a 10-Year Period. Cardiovasc Intervent Radiol. Sep 2022;45(9):1324-1336. doi:10.1007/s00270-022-03199-8

- Kuo TM, Mobley LR. How generalizable are the SEER registries to the cancer populations of the USA? Cancer Causes Control. Sep 2016;27(9):1117-26. doi:10.1007/s10552-016-0790-x

- Hernán MA, Hernández-Díaz S, Robins JM. A structural approach to selection bias. Epidemiology. Sep 2004;15(5):615-25. doi:10.1097/01.ede.0000135174.63482.43

- Hernán MA, Robins JM. Using Big Data to Emulate a Target Trial When a Randomized Trial Is Not Available. Am J Epidemiol. Apr 15 2016;183(8):758-64. doi:10.1093/aje/kwv254

- Page MJ, McKenzie JE, Bossuyt PM, et al. The PRISMA 2020 statement: An updated guideline for reporting systematic reviews. J Clin Epidemiol. Jun 2021;134:178-189. doi:10.1016/j.jclinepi.2021.03.001

- von Elm E, Altman DG, Egger M, et al. The Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) statement: guidelines for reporting observational studies. Lancet. Oct 20 2007;370(9596):1453-7. doi:10.1016/s0140-6736(07)61602-x

- Uhlig J, Kokabi N, Xing M, et al. Ablation versus Resection for Stage 1A Renal Cell Carcinoma: National Variation in Clinical Management and Selected Outcomes. Radiology. Sep 2018;288(3):889-897. doi:10.1148/radiol.2018172960

- Uhlig J, Strauss A, Rücker G, et al. Partial nephrectomy versus ablative techniques for small renal masses: a systematic review and network meta-analysis. Eur Radiol. Mar 2019;29(3):1293-1307. doi:10.1007/s00330-018-5660-3

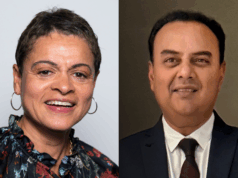

Johannes Uhlig is a medical doctor at Universitätsmedizin Göttingen, Göttingen, Germany; Hyun S Kim is a professor of radiology at the University of Maryland Medical Centre, Baltimore, USA; and Thomas Kroencke is a professor of diagnostic and interventional radiology at the University Hospital Augsburg, Augsburg, Germany.